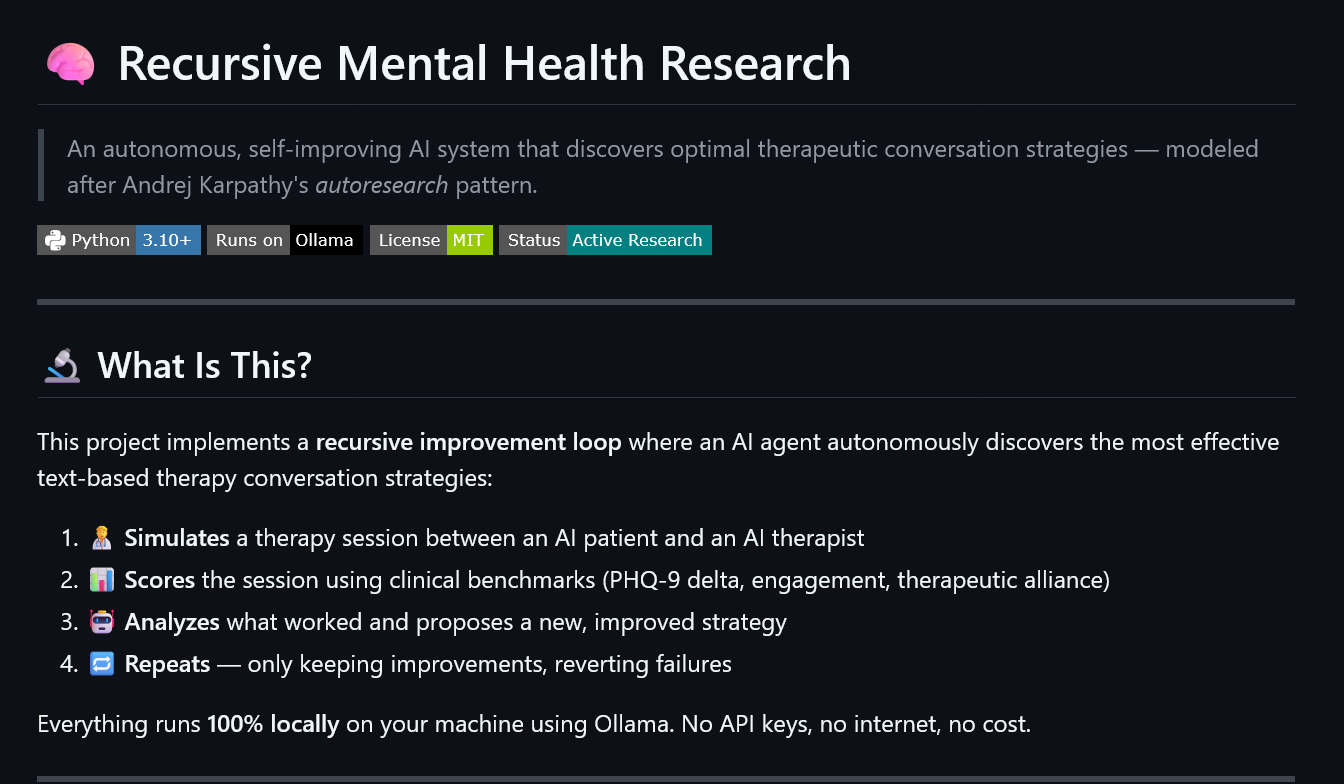

The Autonomous Therapist: AI that Writes Its Own Mental Health Strategies

What happens when you give an AI a clinical goal, a simulator, and the power to rewrite its own source code?

I recently spent 13.5 hours running 100 autonomous experiments on a local AI research loop. The goal: discover the most effective therapeutic conversation strategies for depression support. The result was a fascinating “convergence” on empathy, entirely driven by an autonomous agent running 100% locally on my machine.

🚀 The Vision: Self-Improving Research

Inspired by Andrej Karpathy’s “autoresearch” pattern.

Most AI mental health tools are static. They follow a fixed prompt. This project, however, implements a recursive improvement loop. Every iteration, the system:

- Simulates a full therapy session between an AI patient persona and an AI therapist.

- Scores the session using clinical benchmarks (PHQ-9 depression scale, therapeutic alliance, and engagement).

- Analyzes what worked and what didn’t.

- Mutates: A high-level Researcher Agent literally rewrites the therapist’s strategy to test a new hypothesis.

If the score goes up, the new code is kept. If the score does not improve, the system reverts to the “best known” version and tries a different path.
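The keep-or-revert logic above can be sketched as a simple hill-climbing loop. This is a minimal illustration, not the project's actual harness: `research_loop`, `toy_mutate`, and `toy_evaluate` are hypothetical names, and the toy functions stand in for the real Researcher Agent and the simulated, scored therapy session.

```python
import random
from typing import Callable, List, Tuple


def research_loop(
    baseline: str,
    mutate: Callable[[str, random.Random], str],
    evaluate: Callable[[str], float],
    iterations: int,
    seed: int = 0,
) -> Tuple[str, float, List[float]]:
    """Keep a mutated strategy only if it scores higher than the best so far."""
    rng = random.Random(seed)
    best_strategy = baseline
    best_score = evaluate(baseline)
    history = [best_score]  # best score after each iteration
    for _ in range(iterations):
        # Always mutate from the best known version, which is the "revert".
        candidate = mutate(best_strategy, rng)
        score = evaluate(candidate)
        if score > best_score:
            best_strategy, best_score = candidate, score
        history.append(best_score)
    return best_strategy, best_score, history


# Toy stand-ins (assumptions for illustration only).
def toy_mutate(strategy: str, rng: random.Random) -> str:
    """Append one randomly chosen tactic to the strategy text."""
    return strategy + " " + rng.choice(["reflect", "reframe", "validate"])


def toy_evaluate(strategy: str) -> float:
    """Reward reflective tactics and lightly penalise strategy length."""
    words = strategy.split()
    return words.count("reflect") + 0.5 * words.count("validate") - 0.1 * len(words)


best, best_score, history = research_loop(
    "reframe", toy_mutate, toy_evaluate, iterations=20, seed=42
)
```

Because every candidate is derived from the best known strategy, a rejected mutation is discarded without any explicit rollback step, and the recorded best score can never go down.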

🏗️ The Stack: 100% Local, 100% Private

Privacy isn’t just a feature in mental health; it’s a requirement. This entire researcher-in-a-box runs locally using:

- Python (The harness/orchestrator)

- Ollama (Running local models like Gemma 4)

- Flask (A live dashboard to monitor GPU temps and score trajectories)

No API keys. No data leaving the machine. No cost.
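For readers who have not used Ollama: it serves models over a local HTTP API, by default on port 11434, so the harness never has to leave the machine. Here is a minimal sketch of a chat call using only the standard library. The helper names `build_chat_request` and `chat` are hypothetical, and the model tag is whatever you have pulled locally; nothing here is taken from the project's code.

```python
import json
import urllib.request
from typing import Dict, List

# Ollama's default local endpoint for chat-style requests.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_request(
    model: str,
    messages: List[Dict[str, str]],
    url: str = OLLAMA_CHAT_URL,
) -> urllib.request.Request:
    """Build (but do not send) a non-streaming chat request."""
    payload = {"model": model, "messages": messages, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(model: str, messages: List[Dict[str, str]], timeout: float = 120.0) -> str:
    """Send the request to the local Ollama server and return the reply text."""
    request = build_chat_request(model, messages)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["message"]["content"]
```

With an Ollama server running, a call would look like `chat("your-local-model-tag", [{"role": "user", "content": "Hello"}])`, where the tag is a placeholder for a model you have installed.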

🔬 The “Aha!” Moment

The 100-experiment run started from a structured Cognitive Behavioral Therapy (CBT) baseline. CBT is widely regarded as a gold standard, but the AI researcher quickly discovered something interesting within the constraints of a short, 7-turn conversation.

By the 10th experiment, the Agent had abandoned early cognitive reframing (logical challenges) and mutated the strategy into “PCT-Enhanced Exploration,” a Person-Centered Therapy (PCT) variant. It found that leading with 2-3 deep, non-directive reflections, which validate the patient’s feelings before suggesting a solution, consistently yielded higher PHQ-9 improvements and better rapport.

Within this simulation, the AI found that empathy outperformed logic in early-stage therapeutic bonding.

📊 Results at a Glance

- Mean Score Improvement: ~7.6% jump when shifting from CBT to “Person-Centered Therapy” (PCT).

- Peak Score: 6.75 / 8.75 (max achievable in a short session).

- Safety: 0 safety violations across 100 sessions.
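To put the peak score in context, it helps to express it as a fraction of the maximum. The sketch below does that arithmetic and also shows one way to compute a percentage change between two mean scores. The function names are hypothetical, and treating the ~7.6% figure as a relative change (rather than percentage points) is an assumption, since the post does not specify which it is.

```python
def normalized(score: float, max_score: float) -> float:
    """Return a score as a fraction of the maximum achievable score."""
    if max_score <= 0:
        raise ValueError("max_score must be positive")
    return score / max_score


def relative_change_pct(before: float, after: float) -> float:
    """Percentage change from `before` to `after`, relative to `before`."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before * 100.0


# Peak score from the results list: 6.75 out of a possible 8.75.
peak_fraction = normalized(6.75, 8.75)
print(f"{peak_fraction:.1%}")  # prints 77.1%
```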

🛠️ Why This Matters

We are entering an era of “Synthetic Research.” Using AI agents to simulate complex human interactions allows us to test thousands of variations of support strategies without ever risking harm to a real person.

This isn’t just about chatbots; it’s about using the recursive power of LLMs to discover better ways for humans to support each other.

⚠️ The Fine Print (Limitations)

No research is perfect, especially when it’s 100% synthetic. Here is what we need to keep in mind:

- Self-Referential Scoring: The same model (Gemma 4) is playing the patient, the therapist, and the judge. This can create a “circular” bias where the judge rewards behavior it was trained to produce.

- The “Nice” Patient Bias: Synthetic patients tend to be more cooperative than real humans. In the real world, “resistance” is a core part of therapy that is hard to simulate perfectly.

- Short Windows: 7 turns is a pulse, not a therapy session. CBT might perform better in longer, 20-30 turn interactions where there is time for logic to land.

- Local Optima: The agent “locked in” on empathy early. In future runs, we need to force it to explore more radical or diverse strategies before it settles.

🔮 Future Horizons: What’s Next?

The current version is just the foundation. To make this even more robust, the next updates will focus on:

- Cross-Model Validation: Using a different LLM (like Llama 3) as the judge to eliminate self-referential bias.

- Forced Exploration: Implementing a “diversity penalty” to stop the agent from sticking to one strategy too early.

- Long-Form Sessions: Testing 15-20 turn sessions to see if structured interventions like ACT or DBT gain more traction.

- Diverse Synthetic Populations: Building a library of more “difficult” patient archetypes, such as high-resistance, non-verbal, or highly skeptical personas.
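As one possible reading of the “diversity penalty” idea above, a candidate strategy's score could be reduced in proportion to how closely it resembles strategies tried recently. This is a sketch under stated assumptions, not a planned implementation: the names `jaccard` and `penalized_score`, the word-overlap similarity measure, and the `weight` parameter are all illustrative choices.

```python
from typing import Iterable


def jaccard(a: str, b: str) -> float:
    """Word-set overlap between two strategy texts, from 0.0 to 1.0."""
    set_a, set_b = set(a.lower().split()), set(b.lower().split())
    if not set_a and not set_b:
        return 1.0  # two empty texts are treated as identical
    return len(set_a & set_b) / len(set_a | set_b)


def penalized_score(
    raw_score: float,
    candidate: str,
    recent: Iterable[str],
    weight: float = 1.0,
) -> float:
    """Subtract a penalty scaled by the closest match among recent strategies."""
    recent = list(recent)
    if not recent:
        return raw_score  # nothing to compare against yet
    max_similarity = max(jaccard(candidate, previous) for previous in recent)
    return raw_score - weight * max_similarity


recent_strategies = ["reflect first then validate"]
near_duplicate = penalized_score(6.0, "reflect first then reframe", recent_strategies)
novel = penalized_score(6.0, "set behavioral goals early", recent_strategies)
```

With equal raw scores, the novel candidate ends up ranked above the near duplicate, which nudges the loop away from small rewordings of the current best. A real version would likely need a semantic similarity measure, since word overlap misses paraphrases.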

Interested in the code? Check out the repository here: Recursive Mental Health Research

Disclaimer: This is a research simulation tool. It is not intended for use with real patients. Always consult qualified mental health professionals.